Photo Location Confidence Score: How to Read It

Why a confidence score is useful only when you read the evidence behind it

A confidence score can save time, but it can also mislead people who read it as a final verdict. In photo geolocation, the number is only useful when it is read beside the clues that produced it.

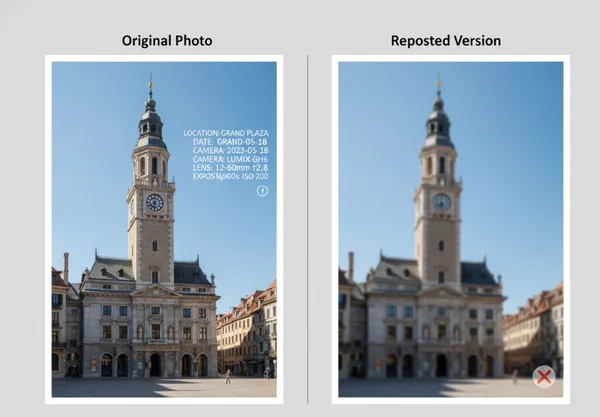

That matters because users bring very different files into the same system. One upload may be an untouched phone photo with GPS tags and a clear landmark. Another may be a reposted image with cropped edges, missing metadata, and only a few visual hints left.

The best way to use the platform is as a photo verification workflow, not as a black-box answer machine. The score helps summarize evidence strength, but the reasoning panel, map view, and visible clues are what tell you whether the result deserves trust.

What a confidence score can actually measure

How EXIF, GPS tags, and image quality shape the starting evidence

A confidence score usually rises when the starting evidence is strong. The Library of Congress Exif format guide says the Exif family stores structured metadata inside image files, including camera settings, time details, geographic data, and thumbnails. The same guide says GPS support arrived in Exif 2.0 and was improved in 2.2, which is why an original file may already contain machine-readable location fields.
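To make "machine-readable location fields" concrete, here is a minimal sketch of turning an Exif-style coordinate into decimal degrees. Exif stores latitude and longitude as degree, minute, and second values with a separate reference letter. The function name `dms_to_decimal` and the sample values are illustrative assumptions, not part of any particular tool.

```python
from fractions import Fraction


def dms_to_decimal(dms, ref):
    """Convert a (degrees, minutes, seconds) triple to signed decimal degrees.

    `dms` holds three rational values, the way Exif stores GPSLatitude
    and GPSLongitude. `ref` is the matching reference letter:
    "N" or "S" for latitude, "E" or "W" for longitude.
    """
    degrees, minutes, seconds = (Fraction(v) for v in dms)
    decimal = degrees + minutes / 60 + seconds / 3600
    # Southern and western hemispheres are negative in decimal notation.
    if ref.upper() in ("S", "W"):
        decimal = -decimal
    return float(decimal)


# Hypothetical sample values, chosen only for illustration.
lat = dms_to_decimal((48, 51, Fraction(2976, 100)), "N")
lon = dms_to_decimal((2, 17, Fraction(4020, 100)), "E")
print(round(lat, 6), round(lon, 6))
```

A file that still carries these fields gives a reviewer a coordinate to compare against the visual evidence, which is part of why original files tend to start from a stronger position.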

That kind of metadata gives the system a head start. If the photo also has good resolution, readable text, or a recognizable skyline, the score is not just reacting to one clue. It is reacting to multiple layers of evidence that point in the same direction.

Why exported copies, reposts, and screenshots weaken the score

The opposite is also true. A shared or edited copy often starts with less evidence than the original file. Apple says location coordinates can be embedded in a photo when camera location access is enabled. Its desktop Photos app also includes an "Include location information" option during sharing or export. That means one version of the image may still hold location data while another version of the same scene may not.

Once that embedded data is gone, the score has to lean more heavily on visible clues. A screenshot may still show a station sign or a mountain outline, but it usually leaves more room for doubt. This is why weak files should be read as clues to investigate, not as proof to repeat without checking.
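The difference between an original and a shared copy can be checked before reading any score. The sketch below assumes the metadata has already been read into a plain dictionary by whatever metadata reader you use. The four tag names are standard Exif GPS tags, while the helper name and the sample dictionaries are hypothetical.

```python
# Standard Exif GPS tag names needed to reconstruct a coordinate.
GPS_FIELDS = ("GPSLatitude", "GPSLatitudeRef", "GPSLongitude", "GPSLongitudeRef")


def missing_gps_fields(tags):
    """Return the GPS tag names that are absent or empty in a tag dictionary."""
    return [name for name in GPS_FIELDS if not tags.get(name)]


# Hypothetical tag dictionaries for two versions of the same scene.
original = {
    "Make": "ExampleCam",
    "GPSLatitude": (48, 51, 29.76),
    "GPSLatitudeRef": "N",
    "GPSLongitude": (2, 17, 40.2),
    "GPSLongitudeRef": "E",
}
exported = {"Make": "ExampleCam"}  # shared without location information

print(missing_gps_fields(original))
print(missing_gps_fields(exported))
```

An empty list means the file can still supply a coordinate. A full list means the result will rest on visible clues alone.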

Three result scenarios readers should interpret differently

High score with strong metadata and visible landmarks

This is the cleanest case. The file keeps rich metadata, the scene contains a distinct place marker, and the result fits what the map shows. In this situation, a high score is meaningful because the system is not guessing from one thin hint.

Even then, the number should be read with the explanation. A strong result should show agreement between metadata, visible landmarks, and the reasoning panel. If those elements reinforce each other, the score is doing its job well.

Mid-range score with partial clues and room for doubt

This is often the most realistic scenario for users who work with reposts, exports, or old files. The image may still have enough context to narrow the location, but some evidence is missing or ambiguous. A middle score usually means the system found something useful, yet still saw competing possibilities.

This is the point where users should slow down. Look for whether the reasoning cites specific details such as road signs, architectural features, terrain, or language. If the score sits in the middle but the explanation feels thin, the result should stay provisional.

Low score on weak or heavily shared images

A low score does not always mean the tool failed. Sometimes it means the image itself is weak. Heavy compression, tight crops, low light, or repeated sharing can strip away the clues that make precise location work possible.

In this case, the result may still be useful as a starting lead. A low score can tell you which region to review first, which visible clues deserve more attention, or whether the image needs a better source file. That is still valuable, especially when the alternative is no structured lead at all.
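The three scenarios can be summarized as a small decision rule. This is only a sketch: the thresholds `high=0.8` and `low=0.4`, the `Evidence` fields, and the labels are assumptions made for illustration, not values taken from any platform.

```python
from dataclasses import dataclass


@dataclass
class Evidence:
    has_gps_metadata: bool  # the file still carries location fields
    landmark_count: int     # distinct place markers cited in the reasoning
    map_agrees: bool        # the map placement fits the cited clues


def interpret(score, evidence, high=0.8, low=0.4):
    """Map a score plus its supporting evidence to a reading of the result.

    The thresholds are illustrative assumptions, not platform values.
    """
    backed = (
        evidence.has_gps_metadata
        and evidence.landmark_count >= 1
        and evidence.map_agrees
    )
    if score < low:
        return "lead"         # a region or clue to investigate first
    if score >= high and backed:
        return "strong"       # number and evidence agree
    return "provisional"      # useful, but competing possibilities remain


print(interpret(0.92, Evidence(True, 2, True)))
print(interpret(0.92, Evidence(False, 1, True)))
print(interpret(0.25, Evidence(False, 0, False)))
```

The design choice worth noticing is that a high number without backing evidence falls into the same provisional bucket as a mid-range score.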

How to use the reasoning panel with the map and coordinates

Signals that support the result

The strongest results show an evidence chain, not just a number. The explanation should point to identifiable clues, and those clues should fit the map and coordinate output. If the platform highlights a train station sign, a road layout, and a mountain line, the map should put the result somewhere those features make sense together.

This is why the map and coordinate view matters. A confidence score becomes much more useful when the surrounding evidence is visible enough for a human reviewer to challenge or confirm it.
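When a file carries its own coordinates, one simple way to challenge a result is to measure how far the reported location sits from them. The sketch below uses the haversine great-circle formula. The 250-meter tolerance and the sample coordinates are assumptions for illustration, and a sensible tolerance depends on the scene.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two decimal-degree points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def coordinates_agree(metadata_point, reported_point, tolerance_m=250.0):
    """True when the two (lat, lon) points lie within the chosen tolerance."""
    return haversine_m(*metadata_point, *reported_point) <= tolerance_m


# Hypothetical points: one from file metadata, two from a location result.
from_metadata = (48.8583, 2.2945)
print(coordinates_agree(from_metadata, (48.8584, 2.2950)))
print(coordinates_agree(from_metadata, (51.5007, -0.1246)))
```

A mismatch does not say which source is wrong. It only marks the result as one that needs a human to look at the map.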

Signals that should make you pause

Some results deserve a second look even when the score seems respectable. Pause when the reasoning stays vague, when the map placement looks broader than the text implies, or when the explanation depends on one weak clue. A single language hint or one skyline shape is rarely enough by itself.

Users should also pause when the real-world stakes are high. If the image is being used in a newsroom, an investigation, or any public claim about where an event happened, treat the score as one part of the review process. Do not treat it as the whole process.
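These pause signals can be written down as an explicit checklist so they are applied the same way each time. The function below is a hypothetical sketch: its parameter names and the idea of comparing a map radius with a claimed radius are assumptions about how a reviewer might record what they see.

```python
def pause_reasons(cited_clues, map_radius_km, claimed_radius_km, high_stakes):
    """Collect reasons to take a second look at a location result.

    cited_clues: specific details named in the reasoning (signs, terrain, ...)
    map_radius_km: how wide the map placement actually is
    claimed_radius_km: how precise the written explanation sounds
    high_stakes: True for newsroom, investigative, or public claims
    """
    reasons = []
    if not cited_clues:
        reasons.append("reasoning names no specific clue")
    elif len(cited_clues) == 1:
        reasons.append("result depends on a single clue")
    if map_radius_km > claimed_radius_km:
        reasons.append("map placement is broader than the text implies")
    if high_stakes:
        reasons.append("high stakes: the score is only one part of the review")
    return reasons


# Hypothetical review of a result that looks respectable at first glance.
for reason in pause_reasons(["skyline shape"], 40.0, 5.0, high_stakes=True):
    print("-", reason)
```

An empty list does not certify the result. It only means none of these particular warning signs were found.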

What not to claim from a confidence score alone

Why a score is not proof by itself

A confidence score is not proof because the underlying evidence has limits. GPS.gov's accuracy guidance says GPS-enabled smartphones are typically accurate to within a 4.9-meter (16-foot) radius under open sky. It also says user accuracy depends on signal blockage, atmospheric conditions, satellite geometry, and receiver quality.

That matters even before visual reasoning enters the picture. If basic location data can shift with environment and device conditions, then a confidence score should never be treated like a courtroom-style certification. It is better understood as a summary of how strongly the available clues support one location over others.
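A short calculation shows what a 4.9 meter radius means in coordinate terms. The sketch assumes a spherical Earth with a mean radius of 6,371 km, which is a simplification, and the helper name is illustrative.

```python
import math

EARTH_RADIUS_M = 6_371_000  # spherical approximation, mean radius in meters
METERS_PER_DEGREE = 2 * math.pi * EARTH_RADIUS_M / 360  # along a meridian


def radius_in_degrees(radius_m, latitude_deg):
    """Express a ground radius as (latitude degrees, longitude degrees)."""
    dlat = radius_m / METERS_PER_DEGREE
    # Longitude degrees shrink on the ground by cos(latitude).
    dlon = dlat / math.cos(math.radians(latitude_deg))
    return dlat, dlon


dlat, dlon = radius_in_degrees(4.9, 60.0)
print(f"{dlat:.6f} degrees of latitude, {dlon:.6f} degrees of longitude")
```

Under these assumptions the radius works out to roughly 0.00004 degrees of latitude, so digits beyond about the fifth decimal place of a phone-derived coordinate describe finer detail than the typical open-sky fix supports.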

When manual verification still matters

Manual review still matters whenever the claim carries consequences. Journalists, researchers, and investigators should compare the reasoning with public map context, local landmarks, timeline fit, and the known source of the image. Everyday users should do the same when the result feels surprising or when the image came from a repost rather than an original file.

The most useful workflow is to move from score to explanation, then from explanation to map review, and then to any outside checks that the situation requires. That is where a location analysis dashboard becomes more useful than a simple yes-or-no location guess.

What to do next before you trust a location result

Start with the file itself. Prefer the original image when possible. Look for metadata, readable text, distinct landmarks, and wide scene context before you give too much weight to the score.

Then review the result in layers. Check the reasoning, compare it with the map, and ask whether the visible clues actually support the named place. If the evidence stack feels thin, keep the result provisional.

The safest habit is simple: trust the score only when the rest of the evidence keeps agreeing with it. That approach makes AI geolocation faster without turning it into false certainty.